Download: MS Word

NCCOOS Data Management

Archive, Process, and Publish

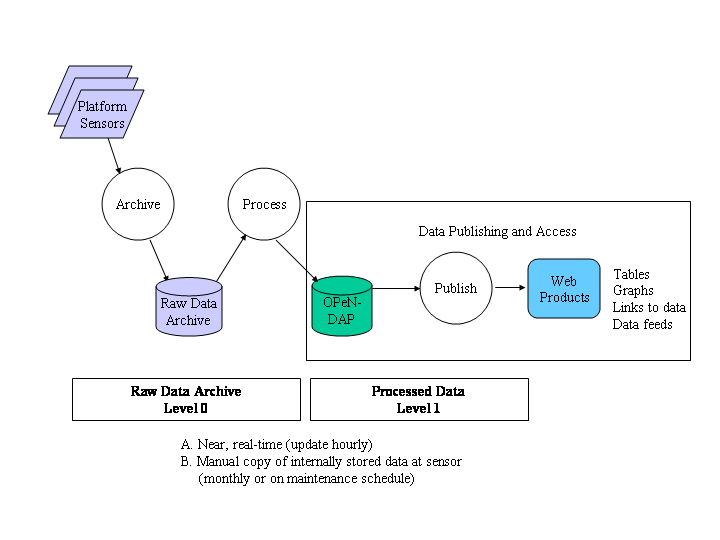

The NCCOOS data management framework comprises three main activities: archiving, processing, and publishing. One goal of the infrastructure is to provide data in near real time: the data need to be automatically archived, processed, checked for quality, and published to graphics and tables on the Web, usually within an hour or two of collection at the sensor. Poor real-time communication with a remote platform sometimes delays this timing, and some deployments are designed without remote communication at all. The second goal of the infrastructure is to support manual processing (or reprocessing) of data once the sensor returns from a deployment or after a prolonged communication outage.

By maintaining the two levels of data (Level 0 and Level 1), we can always return to the raw data for reprocessing if anything is in question or a new method of derivation or quality check needs to be explored.

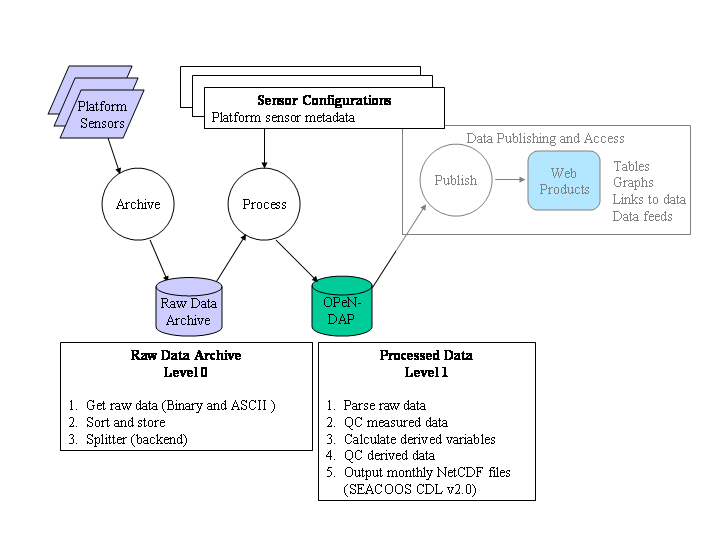

Sensor Configurations

Current and historical sensor metadata are required for both real-time and manual processing (A and B from the previous slide). This metadata includes semantic information such as platform location, deployment dates, sensor depths, water depth, and site information. It also includes syntactic information, such as the data file format the parser should use; for example, a sensor's file format might vary slightly from one deployment to the next after an internal upgrade. The configuration files can also provide placeholders for future quality assurance information, such as calibration data or discovered offsets.
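As a rough illustration of how such a configuration might be organized, the sketch below keeps one record per deployment and looks up the record that applies at a given time. The class name, field names, helper function, and all sample values (`buoy1`, `adcp-v1`, the coordinates) are hypothetical and are not taken from the NCCOOS configuration files.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class SensorConfig:
    """One deployment's metadata for one sensor package (hypothetical schema)."""
    platform: str            # platform identifier
    package: str             # sensor package name, e.g. "adcp"
    start: datetime          # deployment start
    end: Optional[datetime]  # deployment end; None while still deployed
    latitude: float
    longitude: float
    sensor_depth_m: float
    water_depth_m: float
    file_format: str         # tells the parser which raw-file variant to expect
    offset: float = 0.0      # placeholder for an offset discovered later


def config_for(configs, platform, package, when):
    """Return the config whose deployment window contains `when`, or None."""
    for cfg in configs:
        if cfg.platform != platform or cfg.package != package:
            continue
        if cfg.start <= when and (cfg.end is None or when < cfg.end):
            return cfg
    return None


# Made-up example: the file format changes between two deployments.
configs = [
    SensorConfig("buoy1", "adcp", datetime(2007, 1, 1), datetime(2007, 6, 1),
                 34.0, -77.0, 1.0, 30.0, "adcp-v1"),
    SensorConfig("buoy1", "adcp", datetime(2007, 6, 1), None,
                 34.0, -77.0, 1.0, 30.0, "adcp-v2"),
]
print(config_for(configs, "buoy1", "adcp", datetime(2007, 3, 15)).file_format)
```

Keeping historical records alongside the current one is what would let reprocessing of older raw files pick the parser variant that was valid at the time of collection.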

Raw Data Archive

Level 0

- Get raw data hourly over available comms or manual copy

- Sort and store raw files by platform, package, and month

- Split any files containing more than one package (e.g. waves and currents)
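The sorting and splitting steps above could be sketched as follows. The directory layout (`root/platform/package/YYYY_MM/filename`), the function names, and the one-letter line prefixes used to tell packages apart are assumptions made for illustration; the actual archive layout and raw formats are not described here.

```python
from datetime import datetime
from pathlib import PurePosixPath


def archive_path(root, platform, package, sample_time, filename):
    """Build a Level 0 path: root/platform/package/YYYY_MM/filename (assumed layout)."""
    month_dir = sample_time.strftime("%Y_%m")
    return PurePosixPath(root) / platform / package / month_dir / filename


def split_by_package(lines, classify):
    """Group raw lines by the package name returned by classify(line)."""
    groups = {}
    for line in lines:
        groups.setdefault(classify(line), []).append(line)
    return groups


# Hypothetical raw file mixing wave ("W") and current ("C") records.
raw_lines = ["W,1.2,8.0", "C,0.31,185", "W,1.3,7.5"]
prefix_to_package = {"W": "waves", "C": "currents"}
groups = split_by_package(raw_lines, lambda line: prefix_to_package[line[0]])

for package, package_lines in groups.items():
    path = archive_path("/data/level0", "buoy1", package,
                        datetime(2007, 9, 14, 13, 0), "raw.dat")
    print(path, len(package_lines))
```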

Processed Data

Level 1

- Parse raw data based on supplied file-format (many different formats and variations can be supported)

- QC measured data (data availability, gross range checks, rate of change)

- Calculate derived variables (This is where this infrastructure shines. We can have various methods for deriving currents and waves from raw ADCP data and implement new methods without changing the whole structure of data processing.)

- QC and flag derived data (climate range check, rate of change; likewise, we can test different QC methods)

- Output monthly NetCDF files (We have adopted SEACOOS CDL v2.0 as our Level 1 dataset output format. NetCDF is a self-describing file format that lets us store the deployment information and data processing information with each monthly file. The Level 1 dataset is made available to the public via an OPeNDAP server.)
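The QC checks named in the list above (data availability, gross range, rate of change) might look something like the following minimal sketch. The flag values, the function name, and the thresholds are hypothetical, and the rate-of-change test assumes evenly spaced samples; the actual NCCOOS tests and flag conventions may differ.

```python
# Hypothetical flag values; the real flag convention is not specified here.
GOOD, SUSPECT, BAD, MISSING = 1, 2, 3, 9


def qc_flags(values, valid_min, valid_max, max_step):
    """Flag each sample using availability, gross range, and rate-of-change checks."""
    flags = []
    previous = None  # last value that passed the gross range check
    for v in values:
        if v is None:                        # data availability
            flags.append(MISSING)
            continue
        if not (valid_min <= v <= valid_max):  # gross range
            flags.append(BAD)
            continue
        if previous is not None and abs(v - previous) > max_step:
            flags.append(SUSPECT)            # rate of change
        else:
            flags.append(GOOD)
        previous = v
    return flags


# Made-up water temperature series in degrees C.
temps = [10.0, 10.2, None, 55.0, 10.4, 14.0]
print(qc_flags(temps, valid_min=0.0, valid_max=40.0, max_step=2.0))
# → [1, 1, 9, 3, 1, 2]
```

Because the flags are computed separately from the measured values, a different QC method can be swapped in and the Level 1 files regenerated from Level 0 without touching the raw archive.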

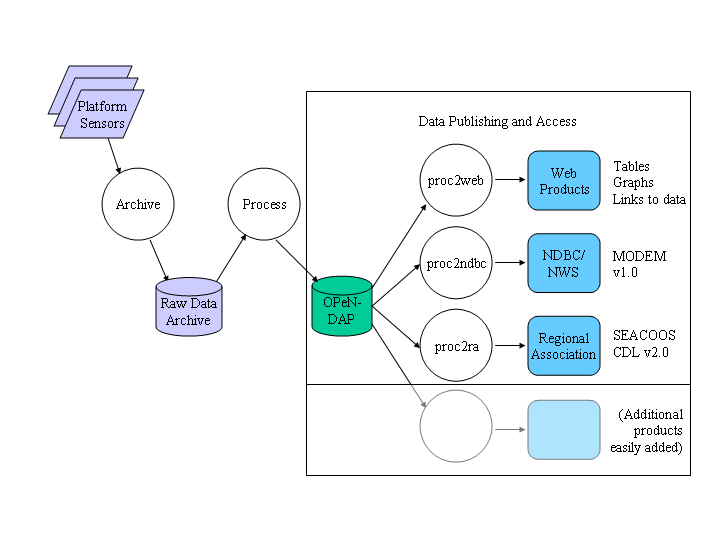

An up-to-date Level 1 dataset lets us publish data to the web efficiently through many avenues. Serving the dataset through the OPeNDAP server is itself one form of publication. Static time-series plots and maps of various parameters can be generated from either OPeNDAP access or local storage. Stylized tables of the most recent data can be made with links to the static plots. The latest data in the Level 1 dataset can also be extracted and reformatted for pushing to the NDBC or the Regional Association.
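Extracting the latest data for a table or an outgoing feed could be sketched as below. The function names and the comma-separated output are purely illustrative; this is not the NDBC submission format, and the sample values are made up.

```python
from datetime import datetime, timedelta


def latest_records(records, window):
    """Return (time, value) pairs within `window` of the newest time, newest first."""
    if not records:
        return []
    newest = max(t for t, _ in records)
    recent = [(t, v) for t, v in records if newest - t <= window]
    return sorted(recent, key=lambda r: r[0], reverse=True)


def to_csv_lines(records):
    """Format records as 'ISO time,value' lines (illustrative format only)."""
    return [f"{t:%Y-%m-%dT%H:%M:%SZ},{v:.2f}" for t, v in records]


# Made-up hourly series.
start = datetime(2007, 9, 14, 0, 0)
series = [(start + timedelta(hours=h), 12.0 + 0.5 * h) for h in range(4)]
for line in to_csv_lines(latest_records(series, timedelta(hours=1))):
    print(line)
```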

Attachments

- image001.png (17.1 kB) - added by haines on 03/13/08 15:52:01.

- image003.png (21.1 kB) - added by haines on 03/13/08 15:53:32.

- image005.png (22.9 kB) - added by haines on 03/13/08 15:56:50.

- NCCOOS-DM-data-flow-2007-09.doc (87.5 kB) - added by haines on 03/13/08 16:22:51.